Detailed Description

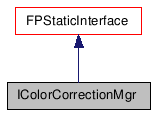

This interface manages the corrections that can be applied to colors.

IColorCorrectionMgr differs from GammaManager in the number of channels corrected and in the correction types supported (Autodesk LUT, gamma). IColorCorrectionMgr manages 3 channels, so all Autodesk LUTs can be loaded. GammaManager can still be used to change or access the display gamma value, or to apply gamma correction to a color, but using IColorCorrectionMgr is now highly recommended: only IColorCorrectionMgr supports Autodesk LUTs. Here is a short description of how this class may be used:

- First, it manages the color correction mode, which is one of the following: kLUT, kGAMMA, or kNONE.

- The color correction mode is read and changed with the GetColorCorrectionMode/SetColorCorrectionMode methods (see their descriptions).

- The Autodesk LUT is read and changed with GetLUT/SetLUT.

- The display gamma value is read and changed with GetGamma/SetGamma.
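The call sequence described above can be sketched as follows. This is a minimal illustration, not SDK code: `ColorCorrectionMgrSketch`, `MockMgr`, and `enableLut` are hypothetical names, plain `char` and `bool` stand in for `MCHAR` and `BOOL`, and the mock stores what it is given without reading any file. The file name and the default gamma are arbitrary.

```cpp
#include <cassert>
#include <string>

enum CorrectionMode { kLUT, kGAMMA, kNONE };

// Hypothetical stand-in for the documented interface, reduced to the
// mode, LUT, and gamma accessors. The real class is declared in
// IColorCorrectionMgr.h and uses MCHAR, BOOL, and CoreExport.
class ColorCorrectionMgrSketch {
public:
    virtual ~ColorCorrectionMgrSketch() = default;
    virtual bool SetLUT(const char* file) = 0;
    virtual const char* GetLUT() const = 0;
    virtual void SetColorCorrectionMode(CorrectionMode mode) = 0;
    virtual CorrectionMode GetColorCorrectionMode() const = 0;
    virtual void SetGamma(float newGamma) = 0;
    virtual float GetGamma() const = 0;
};

// In-memory mock (an assumption, for illustration only).
class MockMgr : public ColorCorrectionMgrSketch {
    std::string lut_;
    CorrectionMode mode_ = kNONE;
    float gamma_ = 1.0f;  // arbitrary default chosen for the sketch
public:
    bool SetLUT(const char* file) override {
        if (file == nullptr || *file == '\0') return false;
        lut_ = file;  // the mode is deliberately left unchanged
        return true;
    }
    const char* GetLUT() const override { return lut_.c_str(); }
    void SetColorCorrectionMode(CorrectionMode mode) override { mode_ = mode; }
    CorrectionMode GetColorCorrectionMode() const override { return mode_; }
    void SetGamma(float newGamma) override { gamma_ = newGamma; }
    float GetGamma() const override { return gamma_; }
};

// Hypothetical helper: load a LUT, then switch to LUT mode.
// Returns false (and leaves the mode alone) if the LUT could not be set.
bool enableLut(ColorCorrectionMgrSketch& mgr, const char* file) {
    if (!mgr.SetLUT(file)) return false;
    mgr.SetColorCorrectionMode(kLUT);
    return true;
}

// Usage: setting a LUT does not change the mode until it is switched.
void demoEnableLut() {
    MockMgr mgr;
    assert(mgr.SetLUT("example.lut"));
    assert(mgr.GetColorCorrectionMode() == kNONE);
    assert(enableLut(mgr, "example.lut"));
    assert(mgr.GetColorCorrectionMode() == kLUT);
}
```

Keeping the LUT, the gamma value, and the mode as three independent pieces of state mirrors the description: each setter changes only its own value.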

#include <IColorCorrectionMgr.h>

Public Types

| Type | Name and values | Description |
| --- | --- | --- |
| enum | CorrectionMode { kLUT, kGAMMA, kNONE } | Describes the color correction mode. |
| enum | ColorChannel { kRED_C, kGREEN_C, kBLUE_C } | |

Public Member Functions

| Return type | Method | Description |
| --- | --- | --- |
| virtual CoreExport BOOL | SetLUT (const MCHAR *file)=0 | This method reads and parses the LUT associated with the file name. |
| virtual CoreExport MCHAR * | GetLUT () const =0 | Returns the name of the current LUT file. |
| virtual CoreExport void | SetColorCorrectionMode (const CorrectionMode mode)=0 | This method sets the current color correction mode. |
| virtual CoreExport CorrectionMode | GetColorCorrectionMode () const =0 | This method returns the current color correction mode. |
| virtual CoreExport void | SetColorCorrectionPrefMode (const CorrectionMode mode)=0 | This method sets the preferred mode used when color correction is enabled. |
| virtual CoreExport CorrectionMode | GetColorCorrectionPrefMode () const =0 | This method returns the preferred mode used when color correction is enabled. |
| virtual UBYTE | ColorCorrect16 (const UWORD b, ColorChannel c) const =0 | Correct a 16-bit color value; the result is returned in 8 bits. |
| virtual UBYTE | ColorCorrect8 (const UBYTE b, ColorChannel c) const =0 | Correct an 8-bit color value. |
| virtual COLORREF | ColorCorrect8RGB (const DWORD col) const =0 | Correct an RGB color. All 3 channels (kRED_C, kGREEN_C and kBLUE_C) are corrected. |
| virtual CoreExport void | SetGamma (float newGamma)=0 | This method sets the display gamma correction value to a new value. |
| virtual CoreExport float | GetGamma () const =0 | This method returns the display gamma correction value currently stored. |

Member Enumeration Documentation

| enum CorrectionMode |

Describes the color correction mode.

Only one correction mode can be active at any moment.

| enum ColorChannel |

Member Function Documentation

| virtual CoreExport BOOL SetLUT | ( | const MCHAR * | file | ) | [pure virtual] |

This method reads and parses the LUT associated with the file name.

This method can be called in ANY color correction mode, and it does NOT change the color correction mode. Whenever the color correction mode is kLUT, the last LUT set here is the one used.

- Parameters:

  - [in] file - new LUT file name

- Returns:

  - false if there was an error reading or parsing the file

| virtual CoreExport MCHAR* GetLUT | ( | ) | const [pure virtual] |

Returns the name of the current LUT file.

This method can be called in ANY color correction mode, and it does NOT change the color correction mode. It returns the current LUT file name whichever mode is active.

- Returns:

  - the current LUT file name

| virtual CoreExport void SetColorCorrectionMode | ( | const CorrectionMode | mode | ) | [pure virtual] |

This method sets the current color correction mode.

- Parameters:

  - [in] mode - the new color correction mode (kGAMMA, kLUT or kNONE)

| virtual CoreExport CorrectionMode GetColorCorrectionMode | ( | ) | const [pure virtual] |

This method returns the current color correction mode.

- Returns:

  - the current color correction mode (kGAMMA, kLUT or kNONE)

| virtual CoreExport void SetColorCorrectionPrefMode | ( | const CorrectionMode | mode | ) | [pure virtual] |

This method sets the preferred mode used when color correction is enabled.

This sets the UI default but does not change the currently active mode.

- Parameters:

  - [in] mode - the preferred mode used when color correction is enabled (kGAMMA or kLUT)

| virtual CoreExport CorrectionMode GetColorCorrectionPrefMode | ( | ) | const [pure virtual] |

This method returns the preferred mode used when color correction is enabled.

This returns the UI default, ordinarily the most recent enabled mode (kGAMMA or kLUT) selected by the user.

- Returns:

  - the preferred mode used when color correction is enabled (kGAMMA or kLUT)
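One way the preferred mode could be used is as the mode restored by an on/off switch. The sketch below assumes exactly that; `ModeStateSketch` and `setCorrectionEnabled` are hypothetical names, and the struct is an in-memory stand-in for the interface, not the SDK class.

```cpp
#include <cassert>

enum CorrectionMode { kLUT, kGAMMA, kNONE };

// Hypothetical in-memory stand-in holding only the two mode values.
struct ModeStateSketch {
    CorrectionMode active = kNONE;
    CorrectionMode preferred = kGAMMA;  // arbitrary initial preference

    void SetColorCorrectionMode(CorrectionMode mode) { active = mode; }
    CorrectionMode GetColorCorrectionMode() const { return active; }

    // Changing the preference never changes the active mode.
    void SetColorCorrectionPrefMode(CorrectionMode mode) {
        // The documentation lists only kGAMMA and kLUT as preferences.
        assert(mode == kGAMMA || mode == kLUT);
        preferred = mode;
    }
    CorrectionMode GetColorCorrectionPrefMode() const { return preferred; }
};

// Hypothetical helper: turning correction on restores the preferred
// mode, turning it off selects kNONE.
template <typename Mgr>
void setCorrectionEnabled(Mgr& mgr, bool enabled) {
    mgr.SetColorCorrectionMode(enabled ? mgr.GetColorCorrectionPrefMode()
                                       : kNONE);
}
```

With this arrangement, calling `SetColorCorrectionPrefMode(kLUT)` while correction is off has no visible effect until `setCorrectionEnabled(mgr, true)` is called.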

| virtual UBYTE ColorCorrect16 | ( | const UWORD | b, ColorChannel c | ) | const [pure virtual] |

Correct a 16-bit color value; the result is returned in 8 bits.

If the color correction mode is set to kNONE, then the color itself is returned.

- Parameters:

  - [in] b - value of the color to correct in 16 bits

  - [in] c - indicates the channel corresponding to the value to be corrected (kRED_C, kGREEN_C or kBLUE_C)

- Returns:

  - corrected value in 8 bits

| virtual UBYTE ColorCorrect8 | ( | const UBYTE | b, ColorChannel c | ) | const [pure virtual] |

Correct 8 bit color value.

If the color correction mode is set to kNONE, then the color itself is returned.

- Parameters:

  - [in] b - value of the color to correct in 8 bits

  - [in] c - indicates the channel corresponding to the value to be corrected (kRED_C, kGREEN_C or kBLUE_C)

- Returns:

  - corrected value in 8 bits
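To make the behavior concrete, here is a minimal sketch of an 8-bit gamma correction. The formula is an assumption: it uses the conventional display curve `out = 255 * (in / 255)^(1 / gamma)`, which this documentation does not confirm as the one applied by the SDK. Only the kNONE pass-through is taken from the description above. `correct8Sketch` is a hypothetical free function, and LUT mode is not modeled.

```cpp
#include <cassert>
#include <cmath>
#include <cstdint>

enum CorrectionMode { kLUT, kGAMMA, kNONE };

// Sketch only: conventional gamma curve, assumed rather than documented.
// Any mode other than kGAMMA returns the input unchanged here; kNONE
// does so per the documentation, kLUT simply is not modeled.
std::uint8_t correct8Sketch(std::uint8_t value, CorrectionMode mode,
                            float gamma) {
    if (mode != kGAMMA) return value;
    assert(gamma > 0.0f);  // the documented range is 0.01 to 5
    const double normalized = value / 255.0;
    const double corrected = std::pow(normalized, 1.0 / gamma);
    return static_cast<std::uint8_t>(std::lround(corrected * 255.0));
}
```

Under this assumed curve, a gamma above 1 brightens mid-tones while leaving 0 and 255 fixed, and a gamma of exactly 1 leaves every value unchanged.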

| virtual COLORREF ColorCorrect8RGB | ( | const DWORD | col | ) | const [pure virtual] |

Correct an RGB color. All 3 channels (kRED_C, kGREEN_C and kBLUE_C) are corrected.

If the color correction mode is set to kNONE, then the color itself is returned.

- Parameters:

  - [in] col - color to correct

- Returns:

  - corrected value of the given color
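Correcting all three channels of a packed color amounts to unpacking, correcting each channel, and repacking. The sketch below assumes the Win32 `COLORREF` layout `0x00BBGGRR` (red in the low byte); `ColorRefSketch` and `correctRgbSketch` are hypothetical names, and the per-channel correction is passed in as a callback rather than taken from the SDK.

```cpp
#include <cassert>
#include <cstdint>

enum ColorChannel { kRED_C, kGREEN_C, kBLUE_C };

// Stand-in for COLORREF: 0x00BBGGRR, red in the low byte.
using ColorRefSketch = std::uint32_t;

// Applies `correct(value, channel)` to each channel and repacks the
// result. `correct` is any callable returning an 8-bit value.
template <typename PerChannel>
ColorRefSketch correctRgbSketch(ColorRefSketch col, PerChannel correct) {
    const auto r = static_cast<std::uint8_t>(col & 0xFF);
    const auto g = static_cast<std::uint8_t>((col >> 8) & 0xFF);
    const auto b = static_cast<std::uint8_t>((col >> 16) & 0xFF);

    const std::uint8_t r2 = correct(r, kRED_C);
    const std::uint8_t g2 = correct(g, kGREEN_C);
    const std::uint8_t b2 = correct(b, kBLUE_C);

    return static_cast<ColorRefSketch>(r2)
         | (static_cast<ColorRefSketch>(g2) << 8)
         | (static_cast<ColorRefSketch>(b2) << 16);
}

// Usage: an identity correction (the kNONE case) returns the color itself.
void demoCorrectRgb() {
    auto identity = [](std::uint8_t v, ColorChannel) { return v; };
    assert(correctRgbSketch(0x00112233u, identity) == 0x00112233u);
}
```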

| virtual CoreExport void SetGamma | ( | float | newGamma | ) | [pure virtual] |

This method sets the display gamma correction value to a new value.

This method can be called in ANY color correction mode, and it does NOT change the color correction mode. The new gamma value is applied equally to each of the three channels. The gamma value must be a float between 0.01 and 5; if newGamma is outside this range, the resulting value will still be within it.

- Parameters:

  - [in] newGamma - the new gamma value
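The range guarantee can be illustrated with a small helper. The documentation states only that the resulting value stays between 0.01 and 5; clamping to the nearest bound is an assumption about how that is achieved, and `clampGammaSketch` and the two constants are hypothetical names.

```cpp
#include <algorithm>
#include <cassert>

constexpr float kMinGammaSketch = 0.01f;
constexpr float kMaxGammaSketch = 5.0f;

// Assumption: out-of-range input is clamped to the nearest bound.
float clampGammaSketch(float newGamma) {
    return std::max(kMinGammaSketch, std::min(newGamma, kMaxGammaSketch));
}

// Usage: in-range values pass through, out-of-range values hit a bound.
void demoClampGamma() {
    assert(clampGammaSketch(2.2f) == 2.2f);
    assert(clampGammaSketch(0.0f) == kMinGammaSketch);
    assert(clampGammaSketch(10.0f) == kMaxGammaSketch);
}
```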

| virtual CoreExport float GetGamma | ( | ) | const [pure virtual] |

This method returns the display gamma correction value currently stored.

This method can be called in ANY color correction mode, and it does NOT change the color correction mode. The returned gamma value is the one applied equally to each of the three channels.

- Returns:

  - current display gamma correction value (a value between 0.01 and 5)